perception - sensor data fusion research and development

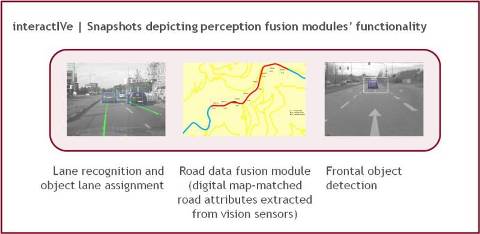

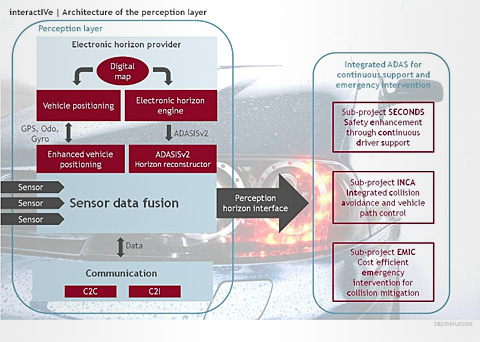

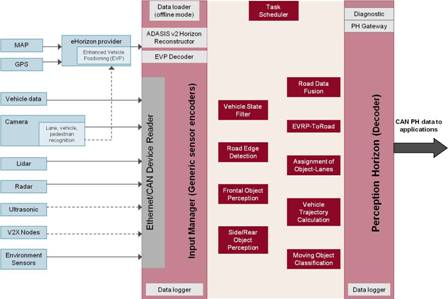

Perception has advanced multi-sensor approaches and focused on sensor data fusion processes. A common perception framework for multiple safety applications and a unified output interface from the perception layer to the application layer have been developed.

Perception has integrated different information sources such as sensors, digital maps, and communications, while extending the range of possible scenarios and the usability of ADAS through multiple integrated functions and active interventions. The development of an innovative model and platform for enhancing the perception of the traffic situation in the vicinity of the vehicle has enabled improved strategies for active safety and driver-vehicle interaction.

Update 2 (March 2013)

The successful implementation of the Reference Perception Platform

The final interactIVe information, warning and intervention (IWI) strategies have been developed and documented in Deliverable D3.2. The strategies refer to how, when and where driver information, warnings, and interventions should be activated. They represent a set of guidelines and recommendations for the targeted interactIVe demonstrator vehicles. The goal is to achieve compatibility between driver and automation as well as a coherent, integrated and efficient driver-vehicle interaction design. The IWI strategies were structured according to the following strategy aspects:

- Layer of driving task

- Level of assistance and automation

- Situation awareness, mental model, mental workload, trust

- Range of operation and availability

- Communication channel

- Sequence of interaction

- States, modes and mode transitions

- Communication of system status

- Arbitration

- Prioritisation and scheduling

- Adaptivity and adaptability.

Apart from developing strategies, different concepts and tools were introduced and discussed. Additionally, a comprehensive framework for an ideal graphic layout of the vehicle cockpit visual HMI was developed, including detailed design drafts for the various visual and acoustic elements. All the IWI strategies, design concepts and tools, and the visual and acoustic elements were applied to the various interactIVe demonstrator vehicles. As a result, the demonstrator vehicles feature individual manufacturer-specific design details while sharing the same underlying strategies, concepts and basic elements of the interaction design.

Update 1 (May 2012)

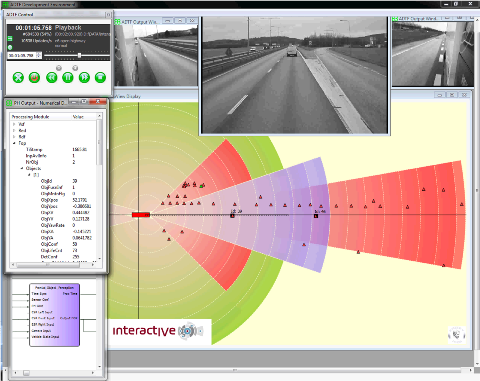

The sub-project “Perception” continued to develop the perception platform. The software engineers focused on implementing the reference perception platform.

The reference perception platform served:

- as a software environment into which the perception modules are integrated, and

- as a common framework to execute their algorithms in.

The reference perception platform design adhered to the functional, software, hardware and perception horizon requirements for perception platform modules that were developed in co-operation with the testing partners.

The main challenge was for the reference platform to comply with the time limits set by the interactIVe applications (an execution cycle close to real time) while the perception modules still meet their tracking and information fusion targets. In addition to the basic reference perception platform components, several auxiliary components have been implemented. These components provided tools to visualize and monitor the platform’s outputs.
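The two roles of the platform described above (an environment that modules are integrated into, and a common framework that executes them within a time limit) can be illustrated with a minimal Python sketch. It is not the interactIVe implementation: the class `ReferencePlatform`, the modules `object_tracker` and `road_model`, the dictionary-based data exchange, and the 50 ms cycle budget are all hypothetical and chosen only for illustration.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical type: a perception module maps sensor inputs to partial outputs.
PerceptionModule = Callable[[Dict[str, object]], Dict[str, object]]


@dataclass
class ReferencePlatform:
    """Toy stand-in for a common execution framework (assumed design)."""
    cycle_budget_s: float  # assumed time limit for one execution cycle
    modules: List[PerceptionModule] = field(default_factory=list)
    overruns: int = 0  # number of cycles that exceeded the budget

    def register(self, module: PerceptionModule) -> None:
        """Integrate a perception module into the platform."""
        self.modules.append(module)

    def run_cycle(self, sensor_inputs: Dict[str, object]) -> Dict[str, object]:
        """Run every registered module once and merge their outputs."""
        start = time.perf_counter()
        output: Dict[str, object] = {}
        for module in self.modules:
            output.update(module(sensor_inputs))
        elapsed = time.perf_counter() - start
        if elapsed > self.cycle_budget_s:
            self.overruns += 1  # monitoring hook: the cycle missed its budget
        output["cycle_time_s"] = elapsed
        return output


def object_tracker(inputs: Dict[str, object]) -> Dict[str, object]:
    # Placeholder module: passes radar detections through as "tracks".
    return {"tracks": list(inputs.get("radar", []))}


def road_model(inputs: Dict[str, object]) -> Dict[str, object]:
    # Placeholder module: reads the lane count from digital map data.
    return {"lane_count": inputs.get("map", {}).get("lanes", 0)}


platform = ReferencePlatform(cycle_budget_s=0.05)
platform.register(object_tracker)
platform.register(road_model)
result = platform.run_cycle({"radar": [(12.0, 0.5)], "map": {"lanes": 2}})
```

Counting budget overruns rather than aborting a cycle is a deliberate simplification here; a real platform would more likely feed such measurements into monitoring tools like the auxiliary components mentioned above.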

Interview with perception experts (December 2010)

During the first year, the perception requirements on sensor interfaces, sensor data fusion, and the perception horizon interface were defined. In an interview, the leaders of the project’s largest sub-project “Perception” talk about their work within interactIVe.

What is your specific task in interactIVe?

Uri Iurgel, Delphi: We ensure that the individual contributions of the partners fit together to deliver a successful perception platform. The challenge is to ensure that the numerous requirements, software modules, interfaces, concepts and conditions fit within one framework.

Angelos Amditis, ICCS: The perception platform is the system’s brain, responsible for real-time merging of information coming from obstacle sensors, digital maps, and car-to-car as well as car-to-infrastructure communication in order to provide the applications side with a unified interpretation of the road environment. As a research unit, the ICCS I-Sense team supports manufacturers and suppliers with the development of data fusion algorithms and tools for the perception platform modules.

What is the particular challenge of your work on the perception platform?

Uri Iurgel: In the predecessor project PReVENT the individual demonstrator cars used independent approaches, which led to duplicated work. One of the main goals of our work now is to deliver a novel common perception framework.

Angelos Amditis: The novelty lies in the design of a common fusion framework that will allow integrating multiple applications into a unified perception framework. That includes the design of standardised interfaces between multiple sensor inputs and the perception platform as well as between the perception platform and the applications.

Uri Iurgel: A new, unified perception horizon interface will deliver information to the applications. Sensors will be attached using the novel concept of a general sensor interface, which makes it possible to obtain input information from various types of sources such as classical sensors, digital maps, and communications.

Angelos Amditis: New sensor data fusion approaches allowing access to lower level information will contribute to enhanced road environment monitoring and will be evaluated in interactIVe demonstrator vehicles.

How will interactIVe perception technologies improve driver support and active safety systems?

Uri Iurgel: The interactIVe technology will lead to a more robust and more reliable perception of the vehicle’s surroundings. Integrating functions that were previously stand-alone will greatly increase the number of situations where the driver can benefit from the safety and support applications.

Angelos Amditis: interactIVe will contribute to broader market penetration of ADAS in lower-class vehicles. Monitoring the road environment based on multiple sensors and sensor fusion concepts is expected to enhance both continuous support and active safety in-vehicle applications. Through the early introduction of new active safety features, Europe will establish and maintain world-wide safety standards for road transportation.

The interview was conducted by EICT.


by the European Commission